Key Takeaway

Generative AI (ChatGPT, Midjourney) creates content from prompts. Agentic AI goes further: it plans, uses tools, makes decisions, and executes multi-step workflows. Generative AI is a feature you add to products. Agentic AI is an architecture that changes how products work. The distinction matters for hiring, budgeting, and product roadmaps.

Every product leader we talk to is evaluating AI. Most of them are asking the wrong question.

The wrong question: “Should we use AI in our product?”

The right question: “What kind of AI system does our use case actually require?”

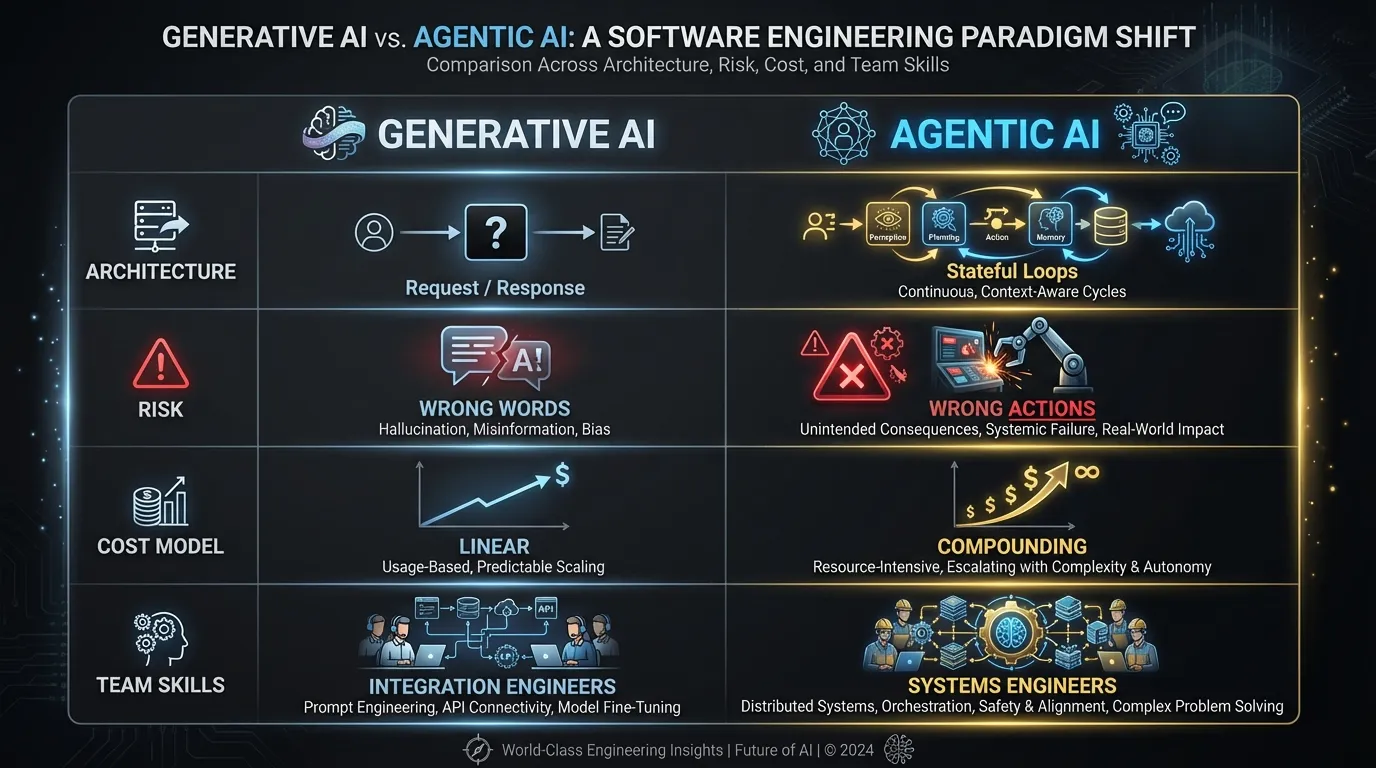

The distinction between generative AI and agentic AI determines your architecture, your cost model, your risk profile, and what kind of engineers you need to hire. Getting it wrong doesn’t just waste budget. It creates the wrong product.

The Short Version

Both generative AI and agentic AI use large language models. The difference is what the system does with the output.

Generative AI produces content. You give it a prompt, it returns text, an image, code, a summary. One request, one response. The system doesn’t remember what it did before, doesn’t make decisions about what to do next, and doesn’t interact with anything outside the model.

Agentic AI takes action. It receives a goal, breaks it into steps, decides which tools to call, executes those steps, evaluates the results, and adjusts. It maintains state across interactions. It operates in loops, not lines.

If generative AI is autocomplete on steroids, agentic AI is a junior employee who can follow a runbook. Both are useful. They solve different problems. And they carry very different implications for the teams building them.

For a deeper look at what makes AI systems agentic, including the spectrum from simple tool-calling to fully autonomous agents, see our full guide on what agentic AI is and how it works.

Where the Differences Actually Matter

The definitions are table stakes. What matters is how these differences cascade through every product decision you make.

Architecture: Request/Response vs. Stateful Loops

A generative AI feature is architecturally simple. User sends input. Model returns output. You might add retrieval-augmented generation (RAG) to ground the response in your data, or fine-tune a model for your domain. But the interaction pattern is stateless: request in, response out.

An agentic system is a different animal. The model calls tools, those tools return results, the model decides what to do next based on those results, and the cycle repeats. You need a state machine or orchestration framework to manage execution. You need a tool registry. You need to define what the agent is allowed to do, what it’s not allowed to do, and what happens when a step fails midway through a five-step workflow.
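To make the loop concrete, here is a minimal sketch of an agent execution layer in Python. It is an illustration under stated assumptions, not a reference implementation: the tool names, the `AgentState` shape, and `stub_model` (a deterministic stand-in for a real LLM call) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical tool registry: the allow-list of what the agent may do.
# Real tools would call APIs; these return canned strings.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: delivered",
    "send_notification": lambda message: f"sent: {message}",
}

@dataclass
class AgentState:
    goal: str
    # Each entry is (tool name, argument, result), kept across steps.
    history: list[tuple[str, str, str]] = field(default_factory=list)
    done: bool = False

def stub_model(state: AgentState) -> Optional[tuple[str, str]]:
    """Stand-in for an LLM call: chooses the next tool from the state so far."""
    if not state.history:
        return ("lookup_order", "A123")
    if len(state.history) == 1:
        return ("send_notification", state.history[-1][2])
    return None  # the goal is considered complete

def run_agent(goal: str, decide=stub_model, max_steps: int = 5) -> AgentState:
    state = AgentState(goal)
    for _ in range(max_steps):  # hard cap so the loop cannot run forever
        action = decide(state)
        if action is None:
            state.done = True
            break
        tool, arg = action
        if tool not in TOOLS:  # anything outside the registry is refused
            raise PermissionError(f"tool not allowed: {tool}")
        result = TOOLS[tool](arg)
        state.history.append((tool, arg, result))
    return state
```

Even at this size, the pieces the paragraph names are visible: a registry that bounds what the agent can do, state that persists between steps, and a step cap that stops a runaway loop.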

The practical difference: a generative feature can be a single API call wrapped in your existing backend. An agentic feature requires its own execution layer. If your team hasn’t built distributed systems before, this is a significant step up in complexity.

Risk: Wrong Words vs. Wrong Actions

When a generative AI system fails, it produces bad text. A hallucinated answer, a factually wrong summary, a bizarre code suggestion. These failures are visible and recoverable. A human reads the output, catches the error, and moves on. The blast radius is small.

When an agentic AI system fails, it takes wrong actions. It sends an email to the wrong customer. It deletes records from a production database. It triggers a payment that shouldn’t have been triggered. It completes four out of five steps correctly, then makes an irreversible mistake on the fifth.

This is the risk profile difference that product leaders underestimate most. Generative AI needs good prompts and evaluation. Agentic AI needs guardrails, sandboxing, human-in-the-loop checkpoints, and rollback mechanisms. The failure mode isn’t “the output was wrong.” It’s “the system did something wrong.”
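A human-in-the-loop checkpoint can be sketched in a few lines. The version below assumes a hypothetical set of irreversible action names and an `approve` callback that would, in a real system, route to a reviewer; none of these names come from a specific framework.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical list of actions that cannot easily be undone.
IRREVERSIBLE = {"send_email", "issue_refund", "delete_record"}

@dataclass
class ProposedAction:
    name: str
    args: dict

def execute_with_checkpoint(
    action: ProposedAction,
    run: Callable[[ProposedAction], None],
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Run reversible actions directly; gate irreversible ones on approval."""
    if action.name in IRREVERSIBLE and not approve(action):
        return "blocked"
    run(action)
    return "executed"
```

The design choice is to classify actions by reversibility rather than by confidence: a read-only lookup never waits for a person, while a refund always does.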

A hallucinated paragraph is embarrassing. A hallucinated action can be costly.

Cost: Linear vs. Compounding

Generative AI costs are roughly linear and predictable. Each API call consumes a bounded number of tokens: the prompt plus a capped response. You can estimate cost per request with reasonable accuracy.

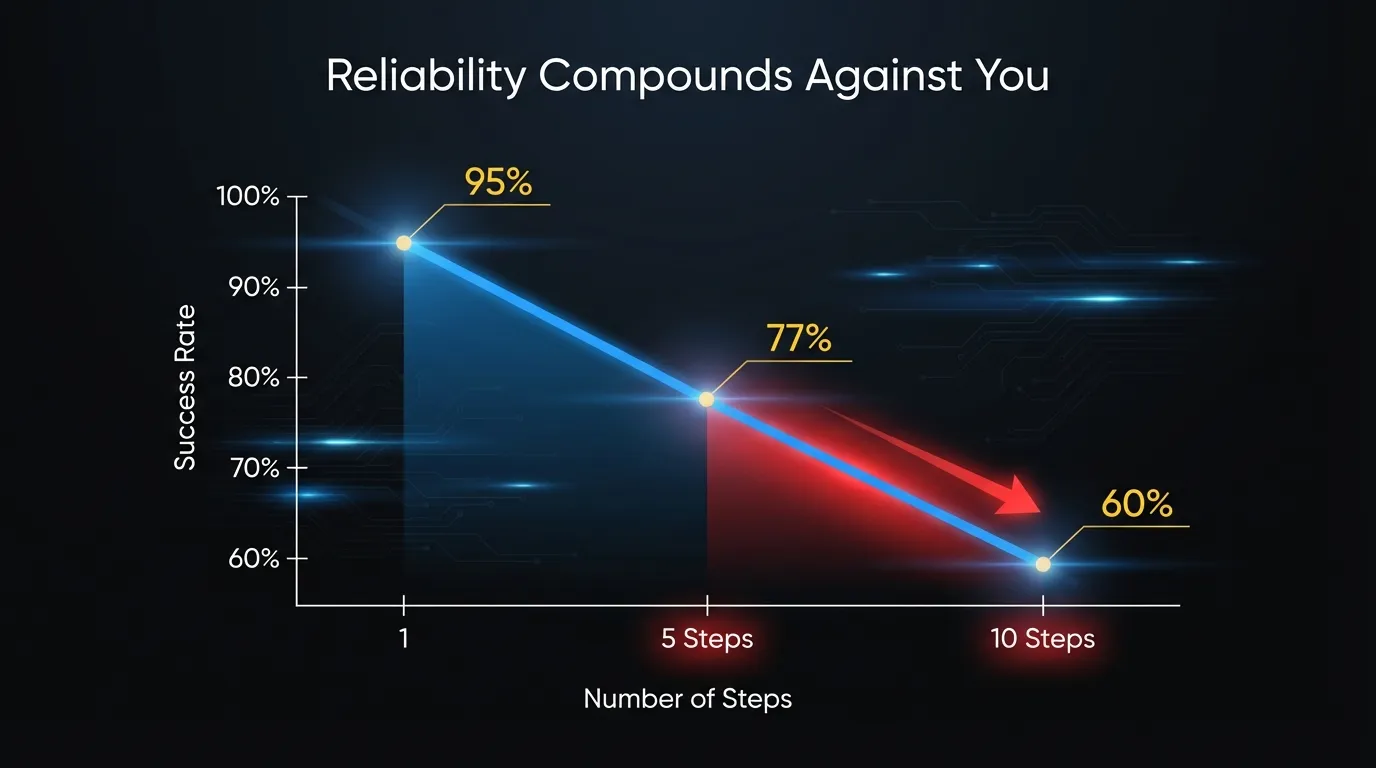

Agentic AI costs compound. Each step in an agent’s execution loop consumes tokens. The model reads the results of the previous step, decides what to do next, generates a tool call, reads the result of that tool call, and continues. A five-step agent loop might consume 5x to 15x the tokens of a single generative call, depending on how much accumulated context is re-sent at each step.
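A rough way to see where the multiplier comes from is to count tokens step by step. The sketch below assumes the whole accumulated context is re-sent on every step, and the token counts are made-up defaults chosen for illustration, not measurements.

```python
def single_call_tokens(prompt: int = 1000, output: int = 300) -> int:
    """Tokens for one generative request: prompt in, response out."""
    return prompt + output

def agent_loop_tokens(
    steps: int, prompt: int = 1000, output: int = 300, tool_result: int = 200
) -> int:
    """Tokens for an agent loop where each step re-reads the full context."""
    total = 0
    context = prompt
    for _ in range(steps):
        total += context + output       # model reads context, writes a tool call
        context += output + tool_result  # both are appended for the next step
    return total
```

With these assumed numbers, a five-step loop uses 11,500 tokens against 1,300 for a single call, roughly 8.8x. Larger tool results or longer outputs push the ratio higher.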

Here’s the math that catches teams off guard: reliability per step compounds too, but in the wrong direction. If each step in an agentic workflow succeeds 95% of the time, and failures are independent, a five-step workflow succeeds 0.95^5 ≈ 77% of the time. A ten-step workflow drops to about 60%. That means retries, which means more token consumption, which means higher cost and less predictable latency.

The cost model for agentic AI isn’t “price per call.” It’s “price per successful task completion,” and it includes the cost of failures.
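The arithmetic can be checked directly. This sketch makes two simplifying assumptions that are not in the text above: step failures are independent, and a failed workflow is retried from scratch at full cost until it succeeds, so the expected number of attempts is 1 divided by the success rate.

```python
def workflow_success_rate(step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return step_success ** steps

def cost_per_successful_task(
    cost_per_attempt: float, step_success: float, steps: int
) -> float:
    """Expected spend per completed task when failures are retried from scratch."""
    return cost_per_attempt / workflow_success_rate(step_success, steps)

five = workflow_success_rate(0.95, 5)   # about 0.77
ten = workflow_success_rate(0.95, 10)   # about 0.60
```

Under those assumptions, a workflow that costs $0.10 per attempt costs about $0.13 per successful five-step task and about $0.17 per successful ten-step task. Real systems usually fail partway and retry only the failed step, so treat this as an upper-bound style estimate.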

Team: Different Engineers, Different Skills

A generative AI feature can often be built by a single senior engineer who understands prompt engineering, evaluation, and your LLM provider’s API. The integration work is mostly plumbing: connect the API, handle the response, display it to the user.

An agentic AI system requires systems engineering. You need people who understand state machines, error recovery, distributed system failure modes, and concurrency. You need someone who can reason about what happens when step three of a seven-step workflow throws an unexpected error. Do you retry? Roll back? Skip and continue? Alert a human?
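Those four options (retry, roll back, skip, alert) can be made explicit as a per-step policy. The sketch below is one hypothetical way to encode them; the `Step` fields and the returned status strings are assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional

class Policy(Enum):
    RETRY = "retry"        # try again, then escalate if still failing
    ROLLBACK = "rollback"  # undo completed steps in reverse order
    SKIP = "skip"          # step is optional, continue without it
    ESCALATE = "escalate"  # stop and hand off to a human

@dataclass
class Step:
    name: str
    run: Callable[[], None]
    on_failure: Policy
    undo: Optional[Callable[[], None]] = None
    max_retries: int = 2

def run_workflow(steps: list[Step]) -> str:
    completed: list[Step] = []
    for step in steps:
        attempts = 1 + (step.max_retries if step.on_failure is Policy.RETRY else 0)
        for _ in range(attempts):
            try:
                step.run()
                completed.append(step)
                break
            except Exception:
                pass  # fall through to the next attempt, if any
        else:  # every attempt failed
            if step.on_failure is Policy.SKIP:
                continue
            if step.on_failure is Policy.ROLLBACK:
                for done in reversed(completed):
                    if done.undo is not None:
                        done.undo()
                return f"rolled back at {step.name}"
            return f"escalated at {step.name}"
    return "completed"
```

Writing the policy down per step forces the conversation the paragraph describes: someone has to decide, before launch, what each failure means.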

The skills gap is not about AI knowledge. It’s about building reliable systems that operate autonomously. Prompt engineering is maybe 20% of the challenge. The other 80% is orchestration, observability, failure handling, and testing.

If you’re staffing a team for generative AI features, you need engineers who are good at integration and evaluation. If you’re staffing for agentic AI, you need engineers who have built systems that run without human supervision. That’s a different hiring profile.

When Generative AI Is the Right Call

Generative AI fits use cases where the value is in producing content, transforming data, or surfacing information.

Content generation. Draft emails, marketing copy, support responses, documentation. The human reviews and edits the output. The system produces; the human decides.

Summarization and extraction. Condense long documents, extract structured data from unstructured text, generate meeting notes. The value is in reducing manual reading and data entry.

Search and retrieval. Natural language queries over your data. Conversational interfaces that help users find what they need without learning query syntax. RAG-based systems that combine retrieval with generation.

Personalization. Dynamic product descriptions, customized onboarding flows, tailored recommendations with generated explanations. The model adapts content to the user’s context.

The common thread: the system’s output goes to a human who evaluates it. The model creates. The human acts.

When Agentic AI Is the Right Call

Agentic AI fits use cases where the value is in completing multi-step tasks that previously required a human to coordinate.

Workflow automation with judgment. Processing insurance claims, triaging support tickets, qualifying sales leads. These aren’t simple if/then rules. They require reading context, making decisions, and routing to the right next step.

Multi-system orchestration. Tasks that span multiple tools or APIs. Updating a CRM record, then creating a task in a project management tool, then sending a notification, all based on a trigger event. The agent replaces the human who used to alt-tab between six applications.

Research and analysis. Gathering information from multiple sources, synthesizing findings, producing a structured report. The agent does the legwork; the human reviews the conclusion.

Autonomous operations. Monitoring systems, identifying anomalies, executing remediation playbooks. Infrastructure management, data pipeline monitoring, incident response triage. These are environments where waiting for a human to act is too slow.

The common thread: the system needs to take multiple steps, make intermediate decisions, and interact with external systems. The model acts. The human supervises.

The Hybrid Reality

Most production AI systems don’t fit neatly into one category. They use both.

An agentic system that processes customer support tickets might use agentic capabilities to look up the customer’s account history, check for open orders, and decide whether to escalate or resolve (multi-step decision-making with tool use), then use generative AI to draft the response (content creation).

A document processing pipeline might use generative AI to extract and summarize data from uploaded files, then use an agent to validate the extracted data against business rules, route exceptions for review, and update downstream systems.

A code review assistant might use generative AI to analyze a pull request and produce comments, then use agentic capabilities to check CI/CD results, cross-reference related tickets, and post the review only if the build passed. The generative piece writes the review. The agentic piece decides when and whether to deliver it.

The pattern: generative AI handles individual reasoning steps within the workflow. Agentic AI handles the orchestration, sequencing, and decision-making between those steps. The generative components are the muscles. The agentic layer is the brain that coordinates them.

This is worth internalizing because it affects how you structure your codebase. Your generative components (prompt templates, model configurations, output parsers) are reusable modules. Your agentic layer (orchestration logic, tool definitions, state management) is the application-specific code that wires them together.
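One way this split can look in code is sketched below. The `Model` type (prompt in, text out), the prompts, and the function names are hypothetical; the point is only the boundary between reusable generative functions and the application-specific layer that sequences them.

```python
from typing import Callable

# Hypothetical model interface: any callable that maps a prompt to text.
Model = Callable[[str], str]

# --- Reusable generative components: prompt template plus output parser ---

def summarize(model: Model, ticket: str) -> str:
    return model(f"Summarize this support ticket:\n{ticket}").strip()

def classify_urgency(model: Model, ticket: str) -> str:
    raw = model(f"Answer HIGH or LOW. How urgent is this ticket?\n{ticket}")
    return "high" if "HIGH" in raw.upper() else "low"

# --- Application-specific agentic layer: sequencing, decisions, tool calls ---

def triage_ticket(
    model: Model,
    ticket: str,
    escalate: Callable[[str], None],
    reply: Callable[[str], None],
) -> str:
    summary = summarize(model, ticket)
    if classify_urgency(model, ticket) == "high":
        escalate(summary)
        return "escalated"
    reply(summary)
    return "replied"
```

Because the model and the tools are passed in, the generative functions can be tested with a fake model and reused in other workflows without touching the orchestration code.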

Teams that start with generative AI features often migrate toward agentic systems over time. The summarization feature becomes a triage agent. The draft generator becomes an outbound workflow. This migration is natural, but it’s not incremental. Moving from generative to agentic usually requires rearchitecting, not refactoring. Plan for that transition if you see it coming.

A Decision Framework for Product Leaders

Before you commit engineering resources, answer four questions.

1. Does the task require multiple steps with decisions between them?

If the AI needs to produce a single output based on a single input, generative AI is sufficient. If the AI needs to produce an output, evaluate it, decide what to do next, and potentially take several more actions before the task is complete, you need agentic capabilities.

Example: generating a product description from attributes is generative. Processing a return request (check order status, verify return window, calculate refund, initiate the return, notify the customer) is agentic.

2. Does the AI need to interact with external systems?

If the model’s job is to produce text, code, or structured data that a human or another system consumes, generative AI is the right tool. If the model needs to call APIs, query databases, trigger workflows, or modify records, you’re in agentic territory.

The number and variety of tool integrations directly affects complexity. Two API calls in a predictable sequence is a simple agent. Fifteen tools with conditional logic is a significant engineering effort.

3. What happens when the AI is wrong?

If a wrong output is caught by a human reviewer before anything happens, you can tolerate higher error rates and invest less in guardrails. If a wrong output triggers an action that’s difficult to reverse (sending an email, modifying data, making a payment), you need extensive safety mechanisms.

Map out the blast radius of failure for your specific use case. That determines how much engineering investment goes into guardrails vs. core features.

4. Can you define “done” for the task?

Generative AI tasks have fuzzy completion criteria. Is this summary good enough? Is this draft ready? Quality is subjective and context-dependent.

Agentic AI tasks need clear success criteria. Did the order get processed? Did the ticket get routed to the right team? Did all five steps complete without error? If you can’t define what “done” looks like in concrete, measurable terms, an autonomous agent will struggle because it can’t evaluate its own performance.
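The four questions can be written down as a small checklist function. This is one possible encoding, not a rule from the framework itself: the field names, the order in which the answers are weighed, and the recommendation strings are all assumptions.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    multi_step_with_decisions: bool   # question 1
    touches_external_systems: bool    # question 2
    errors_hard_to_reverse: bool      # question 3
    done_is_measurable: bool          # question 4

def recommend(uc: UseCase) -> str:
    needs_agent = uc.multi_step_with_decisions or uc.touches_external_systems
    if not needs_agent:
        return "generative"
    if not uc.done_is_measurable:
        # An agent cannot evaluate its own work without a concrete "done".
        return "generative with human review until success criteria are defined"
    if uc.errors_hard_to_reverse:
        return "agentic with guardrails and human checkpoints"
    return "agentic"

# The two examples from question 1, with assumed answers to the other questions.
product_description = UseCase(False, False, False, False)
return_request = UseCase(True, True, True, True)
```

Here `recommend(product_description)` returns `"generative"` and `recommend(return_request)` returns `"agentic with guardrails and human checkpoints"`, which matches how the two examples are classified above.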

The Decision That Compounds

Choosing between generative and agentic AI isn’t a one-time technical decision. It’s a strategic commitment that shapes your product’s architecture, your team’s composition, and your cost structure for the next 12 to 18 months.

Generative AI is faster to ship, cheaper to run, and easier to staff. If your use case fits, don’t over-engineer it. A well-built generative feature can reach production in two to four weeks with a small team. An agentic system with meaningful autonomy typically takes eight to twelve weeks, including the guardrail and observability work that most teams underestimate at the outset.

Agentic AI handles problems that generative AI can’t touch. If your users need multi-step task completion, autonomous workflows, or cross-system orchestration, generative AI will feel like a toy by comparison. The investment is higher, but so is the ceiling. Agentic systems can automate entire job functions, not just individual tasks.

Most products will eventually need both. The question is which to build first, and that depends on where your users feel the most pain today. Start with the use case that has the clearest success criteria and the most measurable impact. Build the generative components first if the value is in content creation or data transformation. Build the agentic layer first if the value is in replacing a manual workflow that’s costing your team hours every week.

If you’re evaluating where agentic AI fits in your product roadmap, our team builds these systems inside existing product organizations. Same codebase, same standups, same release cycle. We can help you figure out which approach fits before you commit engineering resources to the wrong one.